Why LLM-Assisted Validation Requires Clean Metadata

LLM-assisted validation can improve B2B data accuracy, but only when metadata is structured correctly. Learn why clean metadata is essential for reliable AI-driven validation systems.


CapLeads Team

3/6/2026 · 4 min read

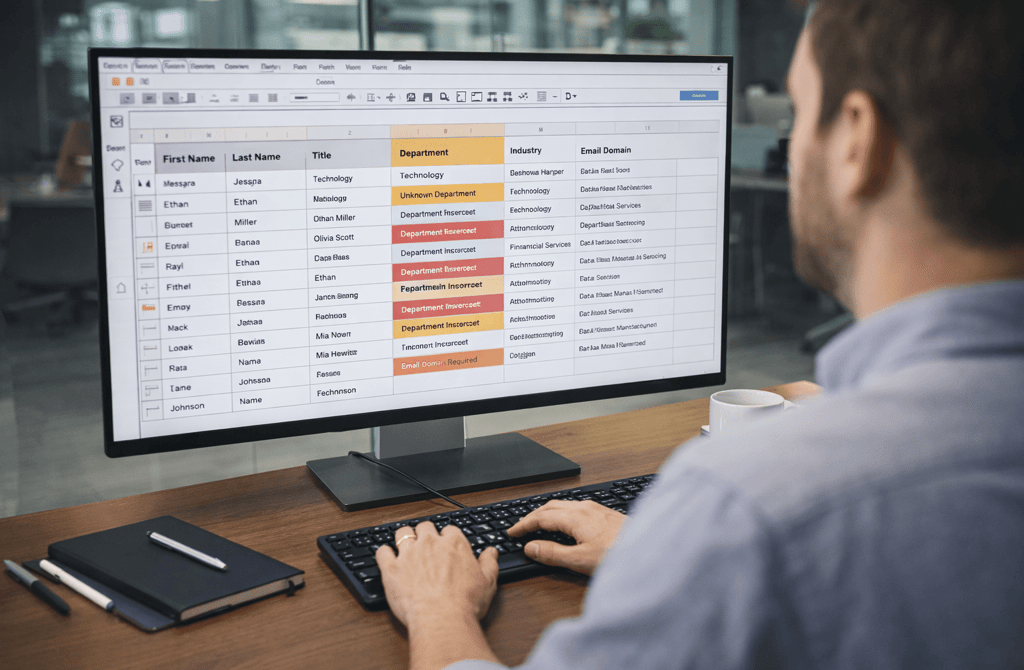

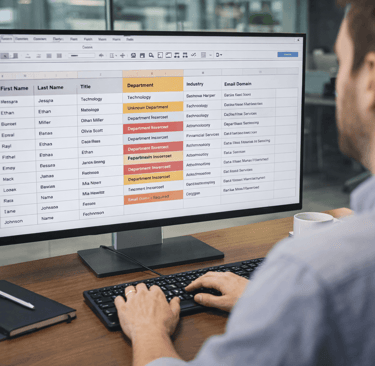

Large language models are increasingly used inside data operations. Teams use them to check job titles, infer departments, verify company descriptions, and even flag suspicious contact records. The promise is appealing: an intelligent system that can interpret messy data and correct errors automatically.

But there is a common misconception behind that promise.

LLMs do not magically fix disorganized datasets. In fact, when metadata is inconsistent or poorly structured, the model often produces unstable conclusions. Instead of clarifying records, the system begins guessing.

This is why teams integrating LLM-assisted validation quickly discover that metadata quality determines whether the model improves accuracy or introduces new uncertainty.

Metadata Is the Language AI Reads

For humans, messy data can sometimes be interpreted through context. If a job title says “Head of Rev,” an experienced operator may still understand that the role refers to revenue leadership.

Models have less to work with.

An LLM can often resolve a single abbreviation like this one, but it sees only the fields it is given and knows nothing about how a particular dataset was assembled. The clearer and more consistent those fields are, the more reliably the model can interpret a record. When the metadata structure is inconsistent, the model struggles to determine what the record actually represents.

For example, a title field might contain variations such as:

VP Sales

Vice President – Sales

Sales Leadership

Revenue Growth Lead

To a human, these look related. But if metadata standards are inconsistent across thousands of records, the model receives mixed signals about what those roles represent.
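As a rough illustration, the variants above could be collapsed to one canonical value before any model sees them. This is a minimal sketch, not a description of any particular tool: the alias table and the `normalize_title` helper are hypothetical, and a real table would be much larger and maintained by the data team.

```python
import re
from typing import Optional

# Hypothetical alias table: normalized variant -> canonical title.
TITLE_ALIASES = {
    "vp sales": "VP Sales",
    "vice president sales": "VP Sales",
}


def normalize_title(raw: str) -> Optional[str]:
    """Return the canonical title, or None when the variant is not recognized."""
    # Lowercase, then turn any run of punctuation, dashes, or spaces into one space.
    key = re.sub(r"[^a-z0-9]+", " ", raw.lower()).strip()
    return TITLE_ALIASES.get(key)


titles = ["VP Sales", "Vice President – Sales", "Sales Leadership", "Revenue Growth Lead"]
normalized = {title: normalize_title(title) for title in titles}

for title, canonical in normalized.items():
    # Unrecognized titles are surfaced for review instead of being guessed.
    print(f"{title!r} -> {canonical or 'NEEDS REVIEW'}")
```

In this sketch the first two titles map to the same value, while "Sales Leadership" and "Revenue Growth Lead" are returned as unrecognized so a person can decide how they should be labeled.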

Validation Requires Contextual Clarity

LLM-assisted validation often works by comparing metadata fields against one another. The model evaluates whether combinations of information make sense together.

If a contact record shows:

Job Title: Chief Financial Officer

Department: Marketing

the model may flag the record because the relationship between those fields appears inconsistent.

But this type of reasoning only works when the metadata itself is reliable. If departments are labeled inconsistently or fields are missing entirely, the model cannot determine whether the relationship is correct.

Instead of validating the data, the LLM is forced to infer context that should already exist in the dataset.
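The dependency on reliable fields is easier to see in a simplified, rule-based stand-in for the comparison described above. This sketch does not call an LLM. The `EXPECTED_DEPARTMENTS` mapping and the three result labels are assumptions made for illustration.

```python
from typing import Optional

# Hypothetical mapping from canonical titles to departments they plausibly belong to.
EXPECTED_DEPARTMENTS = {
    "Chief Financial Officer": {"Finance"},
    "VP Sales": {"Sales", "Revenue"},
}


def check_title_department(title: Optional[str], department: Optional[str]) -> str:
    """Classify a title/department pair as consistent, inconsistent, or unverifiable."""
    # A missing field means there is nothing to compare against.
    if not title or not department:
        return "unverifiable"
    expected = EXPECTED_DEPARTMENTS.get(title)
    # An unrecognized title gives the check no reference point either.
    if expected is None:
        return "unverifiable"
    return "consistent" if department in expected else "inconsistent"


results = [
    check_title_department("Chief Financial Officer", "Marketing"),
    check_title_department("Chief Financial Officer", "Finance"),
    check_title_department("Chief Financial Officer", None),
]
print(results)
```

The third call is the important one: with the department missing, the honest answer is "unverifiable", not a guess.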

Field Structure Matters More Than Volume

Another mistake teams make when implementing LLM validation is assuming that more data automatically improves results.

In reality, the structure of the metadata matters more than the quantity of records.

A dataset with clear column definitions—job titles, departments, industries, company size—gives the model stable reference points. Each field becomes a signal the model can evaluate against other fields.

But when those fields contain ambiguous entries such as “unknown,” “general management,” or inconsistent abbreviations, the system loses its ability to reason about the relationships inside the record.

This is why LLM validation works best when metadata standards are enforced before the model processes the dataset.
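One possible way to enforce standards up front is a small gate that holds back records with missing or ambiguous fields. The field names, the placeholder list, and the `find_metadata_issues` helper below are all assumptions for the sake of the example, not a prescribed schema.

```python
from typing import Dict, List

# Assumed column names, based on the fields mentioned in this article.
REQUIRED_FIELDS = ("job_title", "department", "industry", "company_size")
# Assumed set of values treated as too ambiguous to reason about.
PLACEHOLDER_VALUES = {"", "unknown", "n/a", "general management"}


def find_metadata_issues(record: Dict[str, object]) -> List[str]:
    """List the problems that should be fixed before the record reaches the model."""
    issues = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if value is None:
            issues.append(f"{field}: missing")
        elif str(value).strip().lower() in PLACEHOLDER_VALUES:
            issues.append(f"{field}: ambiguous value {value!r}")
    return issues


records = [
    {"job_title": "Threat Analyst", "department": "Security",
     "industry": "Cybersecurity", "company_size": "51-200"},
    {"job_title": "Security Architect", "department": "unknown",
     "industry": "Cybersecurity"},
]

# Only records with no issues are passed on to LLM validation.
ready = [r for r in records if not find_metadata_issues(r)]
needs_cleanup = [r for r in records if find_metadata_issues(r)]
print(len(ready), "ready;", len(needs_cleanup), "held back")
```

Records in `needs_cleanup` go back to a cleanup step instead of being sent to the model with gaps it would have to fill by inference.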

Clean Metadata Enables Cross-Field Reasoning

One of the strengths of LLM-assisted validation is its ability to analyze relationships across multiple fields simultaneously.

For instance, the model may examine how job titles correlate with company size or how departments align with specific industries. These relationships help the system determine whether a contact record is plausible.

This type of reasoning becomes especially valuable when working with cybersecurity companies in B2B outreach, where role structures often include specialized titles such as security architects, threat analysts, and compliance leads. Without clean metadata, the model may misinterpret these roles or incorrectly group them into unrelated departments.

Structured metadata ensures that LLMs interpret these specialized roles correctly rather than flattening them into generic categories.
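To keep cross-field reasoning stable, each record can be handed to the model in the same labeled shape every time. The sketch below only builds the prompt text and does not call any specific LLM API. The field names, the prompt wording, and the `build_validation_prompt` helper are assumptions.

```python
import json
from typing import Dict

# Assumed field order, so every record is serialized identically.
FIELD_ORDER = ("job_title", "department", "industry", "company_size")


def build_validation_prompt(record: Dict[str, object]) -> str:
    """Serialize the known fields in a fixed order and wrap them in an instruction."""
    # Fields outside FIELD_ORDER are dropped; missing fields appear explicitly as null.
    payload = {field: record.get(field) for field in FIELD_ORDER}
    return (
        "Assess whether the fields in this contact record are plausible together. "
        "Answer 'plausible' or 'implausible' and name any conflicting fields.\n"
        + json.dumps(payload, indent=2)
    )


prompt = build_validation_prompt({
    "job_title": "Threat Analyst",
    "department": "Security",
    "industry": "Cybersecurity",
    "company_size": "51-200",
    "notes": "free text that is not sent",
})
print(prompt)
```

Because missing fields are serialized as `null`, the model is shown the gap explicitly and the prompt keeps the same shape for every record.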

Messy Metadata Creates False Signals

When metadata quality degrades, LLM-assisted validation begins producing misleading outputs.

The system may flag legitimate records as suspicious because field relationships appear inconsistent. At the same time, incorrect records may pass validation simply because the model cannot detect the underlying problem.

This creates a dangerous illusion: the dataset appears validated, but the signals driving the validation are unstable.

Over time, these hidden inaccuracies compound across larger datasets. The validation layer that was supposed to improve accuracy becomes another source of noise.

What LLM Validation Actually Depends On

The effectiveness of LLM-assisted validation depends on three foundational elements:

Consistent field definitions

Standardized metadata values

Reliable relationships between fields

When these elements exist, LLMs can analyze patterns across large datasets and identify anomalies that would be difficult for humans to detect manually.

Without them, the model spends most of its effort trying to interpret ambiguous metadata rather than validating meaningful signals.

The Real Role of LLMs in Data Validation

LLMs are powerful reasoning systems, but they are not replacements for structured data practices. Instead, they act as an analytical layer that sits on top of well-organized datasets.

When metadata is clean and consistent, the model can evaluate relationships, detect inconsistencies, and flag anomalies far more reliably.

When metadata is chaotic, the model is forced to guess.

Bottom Line

LLM-assisted validation works best when metadata provides a stable structure for interpretation. Clean fields allow the model to analyze relationships across records instead of trying to decode ambiguous inputs.

The model’s intelligence becomes useful only when the dataset speaks a clear language.

When metadata is structured carefully, validation systems become far more reliable.

When metadata is inconsistent, even advanced AI struggles to separate real signals from noise.

