Why Strong Scoring Depends on Field Completeness

Lead scoring accuracy collapses when critical fields are missing. Learn why complete firmographic and role data determines true prioritization strength.


CapLeads Team

2/23/2026 · 3 min read

A scoring model doesn’t break loudly.

It drifts quietly the moment it starts compensating for missing information.

Most teams think weak scoring comes from bad weighting logic. They tweak percentages. They rebalance fit vs intent. They adjust thresholds.

But in reality, scoring strength collapses much earlier — at the field level.

When critical fields are incomplete, your model doesn’t just lose precision. It starts inventing confidence.

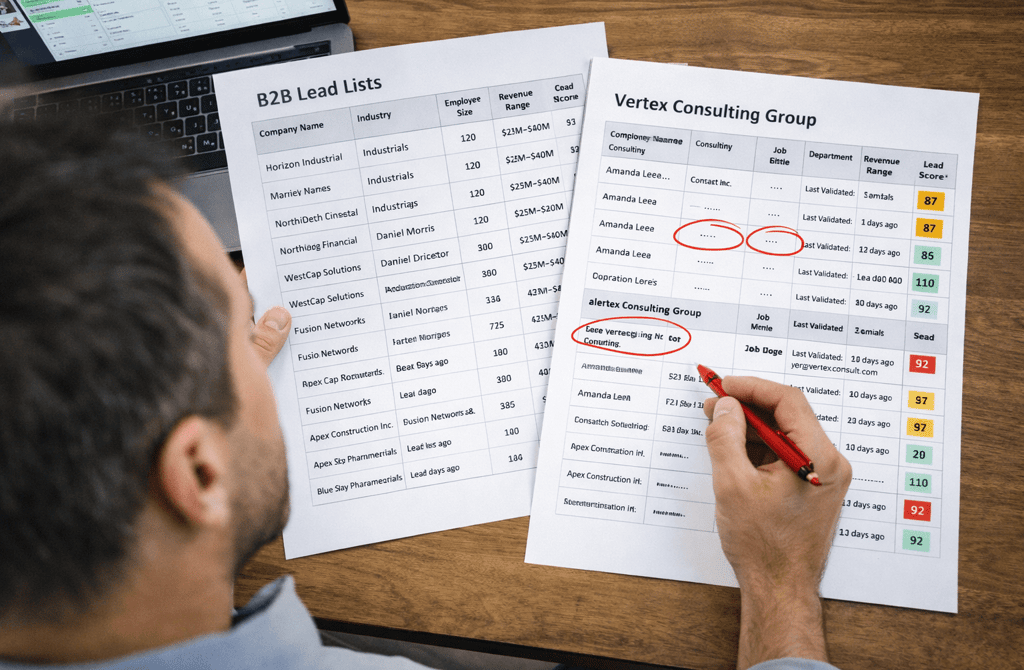

The Illusion of a “Strong” Score

A lead score is nothing more than a weighted interpretation of available fields.

Company size.

Revenue range.

Industry classification.

Department.

Seniority.

Recency.

Engagement.

If those fields are complete and accurate, the score reflects structured probability.

If half of those fields are missing, the score becomes an overconfident guess.

The danger is subtle. Your dashboard still shows a number. Your prioritization queue still fills up. Your SDR team still sees ranked accounts.

But the absence of foundational data forces the scoring system to lean heavily on whatever fields remain populated — often engagement or partial firmographics.
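To make the mechanism concrete, here is a minimal Python sketch of a scorer that skips blank fields and renormalizes the remaining weights. The field names, weights, and sub-scores are illustrative assumptions, not values from any particular scoring tool.

```python
from typing import Optional

# Hypothetical weights for illustration only; they sum to 1.0.
WEIGHTS = {
    "company_size": 0.25,
    "revenue": 0.25,
    "department": 0.15,
    "seniority": 0.15,
    "engagement": 0.20,
}

def naive_score(subscores: dict[str, Optional[float]]) -> float:
    """Weighted average over populated fields only (each sub-score is 0..1).

    Blank fields (None) are skipped and the remaining weights are
    renormalized, so whatever is populated carries the whole score.
    """
    present = {f: s for f, s in subscores.items() if s is not None}
    total_weight = sum(WEIGHTS[f] for f in present)
    if total_weight == 0:
        return 0.0
    weighted = sum(WEIGHTS[f] * s for f, s in present.items())
    return 100 * weighted / total_weight

# A fully populated record with moderate fit and strong engagement.
complete = {"company_size": 0.6, "revenue": 0.5, "department": 0.7,
            "seniority": 0.6, "engagement": 0.9}
# The same seniority and engagement, but the fit fields are blank.
sparse = {"company_size": None, "revenue": None, "department": None,
          "seniority": 0.6, "engagement": 0.9}

complete_score = naive_score(complete)
sparse_score = naive_score(sparse)
```

With these made-up numbers the complete record scores 65, while the sparse record scores about 77. Nothing about the sparse lead is better. The two populated fields simply absorbed the weight of the three missing ones.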

That’s how inflated prioritization begins.

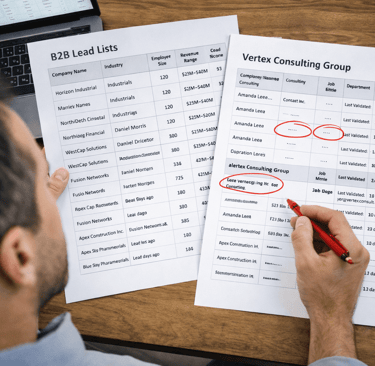

How Missing Fields Distort Scoring Logic

Field incompleteness doesn’t weaken scoring evenly. It creates directional bias.

1️⃣ Company Size Gaps

If employee size is blank, your scoring model cannot correctly assess buying capacity. Instead, it leans on industry or engagement.

This overweights short-term behavior and underweights structural fit.

Now you’re prioritizing responsive small accounts over larger, strategically aligned ones.

2️⃣ Revenue Range Missing

Revenue data isn’t just a vanity metric — it defines budget band.

Without it, your model assumes neutrality. But neutrality isn’t neutral. It hides risk.

A $2M consulting firm and a $200M services firm shouldn’t carry the same priority logic.

This is especially true when working with B2B leads in the Tech, Media and Telecom industry, where revenue scale directly impacts buying authority and project scope. Missing revenue fields can silently flatten meaningful distinctions inside your ICP.
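As a rough illustration of why neutrality hides risk, the sketch below fills a missing revenue field with a midpoint value. The band thresholds, sub-scores, and the 0.5 default are all hypothetical.

```python
from typing import Optional

NEUTRAL = 0.5  # assumed default sub-score when revenue is blank

def revenue_subscore(revenue_usd: Optional[float]) -> float:
    """Map annual revenue to a 0..1 sub-score using made-up bands."""
    if revenue_usd is None:
        return NEUTRAL  # "neutral" fill for missing data
    if revenue_usd >= 100_000_000:
        return 0.9
    if revenue_usd >= 10_000_000:
        return 0.6
    return 0.2

known_small = revenue_subscore(2_000_000)    # $2M consulting firm
known_large = revenue_subscore(200_000_000)  # $200M services firm
blank = revenue_subscore(None)               # either firm with revenue missing
```

When revenue is known, the two firms sit far apart (0.2 versus 0.9 in this sketch). When it is blank, both land on 0.5: the small firm is overrated, the large one is underrated, and the score gives no hint that either happened.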

3️⃣ Department and Seniority Blind Spots

When department fields are empty, title parsing becomes fragile.

“Director” in Marketing behaves differently from “Director” in Operations.

If the department is missing, your scoring model inflates generic seniority weight — even when the role is misaligned.

That’s how mid-level operators get scored like economic buyers.
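A small Python sketch of this blind spot, under stated assumptions: the lookup tables, the generic fallback, and the cap are hypothetical, and which department counts as the economic buyer depends entirely on your own ICP.

```python
from typing import Optional

# Hypothetical lookup: how much a title counts depends on the department.
SENIORITY_BY_DEPT = {
    ("marketing", "director"): 0.9,   # assumed economic buyer for this ICP
    ("operations", "director"): 0.4,  # assumed influencer, not buyer
}
# Assumed fallback that many setups apply when department is blank.
GENERIC_SENIORITY = {"director": 0.8}
# Assumed conservative ceiling for titles with no department context.
CAPPED_UNKNOWN = 0.4

def seniority_subscore(title: str, department: Optional[str],
                       cap_unknown: bool = False) -> float:
    """Return a 0..1 seniority sub-score for a title.

    With a department, the (department, title) pair is looked up.
    Without one, the generic title weight is used, optionally capped.
    """
    title = title.strip().lower()
    if department is None or not department.strip():
        generic = GENERIC_SENIORITY.get(title, 0.0)
        return min(generic, CAPPED_UNKNOWN) if cap_unknown else generic
    return SENIORITY_BY_DEPT.get((department.strip().lower(), title), 0.0)

with_department = seniority_subscore("Director", "Operations")
missing_department = seniority_subscore("Director", None)
missing_but_capped = seniority_subscore("Director", None, cap_unknown=True)
```

In this sketch an Operations director scores 0.4 when the department is known and 0.8 when it is blank. Capping the fallback is one possible guard. It brings the blank-department case back down to 0.4 instead of rewarding the gap.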

Why Incomplete Fields Create Artificial Strength

Here’s the structural problem:

Scoring systems rarely penalize missing data aggressively enough.

Instead of lowering confidence when key fields are absent, many models simply ignore the missing variable.

The result?

A record with no revenue, no department, and an outdated validation date can still achieve a high score because engagement or industry match remains intact.

The model's logic isn’t wrong.

Its inputs are incomplete.

And incomplete inputs create false positives faster than flawed logic ever will.

The System-Level Impact

Field incompleteness doesn’t just mis-rank leads.

It creates downstream distortions:

High-score tiers show lower-than-expected reply rates

Sales conversations stall due to misaligned authority

ICP refinements appear inconsistent

Lead scoring “feels unreliable”

Teams often respond by rebuilding scoring frameworks.

But the issue isn’t the formula.

It’s the data foundation underneath it.

Field Completeness as a Confidence Multiplier

A strong score isn’t about higher numbers.

It’s about higher signal integrity.

When core fields are consistently populated:

Company size sharpens targeting bands

Revenue filters refine deal probability

Department precision improves message framing

Seniority mapping aligns authority correctly

Recency anchors score validity in time

Completeness creates stability.

Stability creates predictability.

And predictability is what makes prioritization trustworthy.
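One way to picture completeness as a multiplier is to scale the raw score by the share of core fields that are populated. The sketch below assumes a five-field core set and a simple linear discount. Both are illustrative choices, not a recommended formula.

```python
# Assumed core field set for illustration.
CORE_FIELDS = ("company_size", "revenue", "department",
               "seniority", "last_validated")

def completeness(record: dict) -> float:
    """Share of core fields that are populated (0..1)."""
    populated = sum(1 for f in CORE_FIELDS if record.get(f) not in (None, ""))
    return populated / len(CORE_FIELDS)

def adjusted_score(raw_score: float, record: dict) -> float:
    """Discount the raw score linearly by field completeness."""
    return raw_score * completeness(record)

sparse = {"company_size": None, "revenue": None, "department": "",
          "seniority": "Director", "last_validated": "2026-01-15"}
full = {"company_size": 1200, "revenue": 200_000_000,
        "department": "Marketing", "seniority": "VP",
        "last_validated": "2026-01-15"}

sparse_adjusted = adjusted_score(80, sparse)  # 2 of 5 core fields populated
full_adjusted = adjusted_score(65, full)      # 5 of 5 core fields populated
```

Here a raw score of 80 on a record with two of five core fields drops to roughly 32, while a raw score of 65 on a complete record stays at 65. A gentler curve or per-field penalties would work too. The point is that missing fields pull the score down instead of being silently skipped.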

The Audit Most Teams Skip

If you want to evaluate scoring strength, don’t start with weights.

Start with completeness ratios.

Ask:

What percentage of scored leads have revenue populated?

How many records lack department classification?

How often are validation timestamps missing or outdated?

What proportion of high-score accounts contain at least 80% of core fields?

If top-tier leads are built on partial records, your model is amplifying noise.
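The audit questions above can be sketched in a few lines of Python. The record layout, the sample leads, the score cutoff of 80, and the 180-day staleness window are assumptions for illustration. Swap in your own CRM export and thresholds.

```python
from datetime import date

# Assumed core field set and record layout.
CORE_FIELDS = ("company_size", "revenue", "department",
               "seniority", "last_validated")

def is_populated(value) -> bool:
    """Treat None and blank strings as missing."""
    return value is not None and str(value).strip() != ""

def fill_rate(records: list, field: str) -> float:
    """Share of records with the given field populated."""
    if not records:
        return 0.0
    return sum(is_populated(r.get(field)) for r in records) / len(records)

def is_stale(last_validated, as_of: date, max_age_days: int = 180) -> bool:
    """Missing or old validation dates (ISO strings) both count as stale."""
    if not is_populated(last_validated):
        return True
    return (as_of - date.fromisoformat(last_validated)).days > max_age_days

def top_tier_complete_share(records: list, min_score: float,
                            min_completeness: float = 0.8) -> float:
    """Share of high-score records holding enough core fields."""
    top = [r for r in records if r.get("score", 0) >= min_score]
    if not top:
        return 0.0
    ok = sum(
        1 for r in top
        if sum(is_populated(r.get(f)) for f in CORE_FIELDS) / len(CORE_FIELDS)
        >= min_completeness
    )
    return ok / len(top)

# Made-up sample leads.
leads = [
    {"score": 90, "company_size": 250, "revenue": None, "department": "",
     "seniority": "Director", "last_validated": None},
    {"score": 85, "company_size": 1200, "revenue": 200_000_000,
     "department": "Marketing", "seniority": "VP",
     "last_validated": "2026-01-15"},
    {"score": 40, "company_size": 30, "revenue": 2_000_000,
     "department": "Operations", "seniority": "Manager",
     "last_validated": "2025-11-02"},
    {"score": 82, "company_size": None, "revenue": 15_000_000,
     "department": "IT", "seniority": "Director",
     "last_validated": "2025-12-20"},
]

as_of = date(2026, 2, 23)
revenue_fill = fill_rate(leads, "revenue")
missing_department = sum(not is_populated(r.get("department")) for r in leads)
stale_share = sum(is_stale(r.get("last_validated"), as_of) for r in leads) / len(leads)
top_complete = top_tier_complete_share(leads, min_score=80)
```

In this sample, three of four leads have revenue, one lacks a department, one has no usable validation date, and only two of the three leads scoring 80 or higher meet the 80% completeness bar. The highest-scoring lead is also the emptiest record, which is exactly the pattern this audit is meant to surface.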

Field completeness is not enrichment for personalization.

It is structural reinforcement for prioritization.

Bottom Line

Lead scoring only works when the fields underneath it are structurally sound.

Missing data rarely lowers a score visibly. It erodes the score's reliability without ever showing up on the dashboard.

A model built on partial records will always misplace urgency.

A model grounded in complete, accurate fields turns prioritization into a measurable advantage.
