The Blending Rules That Prevent Data Integrity Loss

When multiple datasets are combined without structure, integrity erodes fast. Here are the blending rules that protect field accuracy, resolve conflicts, and prevent silent data corruption.

INDUSTRY INSIGHTSLEAD QUALITY & DATA ACCURACYOUTBOUND STRATEGYB2B DATA STRATEGY

CapLeads Team

2/26/20263 min read

Most data integrity failures don’t start with corruption.

They start with merging.

When teams combine datasets, the intention is enrichment — better coverage, stronger fields, deeper segmentation. But without strict blending rules, integration quietly introduces contradictions that erode structural trust.

Blending is not stacking records together.

It is deciding which truth survives.

If that decision logic isn’t defined upfront, integrity loss becomes gradual and invisible.

Blending Without Hierarchy Creates Silent Overwrites

When two sources disagree, one must win.

If Source A lists a contact as “Director of Operations”

and Source B lists them as “VP Operations,”

a merge process must determine:

Which title is more recent?

Which source has higher authority?

Should both be preserved with timestamps?

Without hierarchy rules, merges often default to:

Last imported value wins

Or first value preserved

Or random overwrite based on system logic

Each of these approaches risks degrading accuracy.

Data blending requires source weighting — not blind merging.

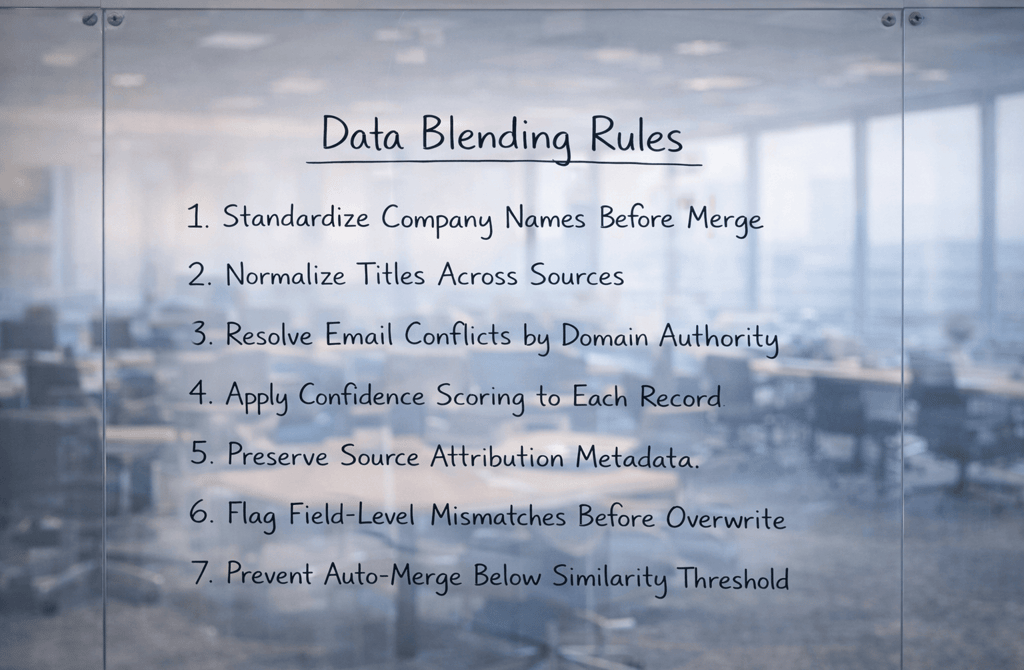

Standardization Must Precede Consolidation

Blending raw fields before normalization multiplies inconsistencies.

Company names, for example, vary widely:

“Acme Ltd.”

“Acme Limited”

“Acme Ltd”

“ACME LTD”

If consolidation occurs before standardization, duplicate entities remain hidden under formatting differences.

Title variation behaves similarly:

“Chief Revenue Officer”

“CRO”

“Head of Revenue”

Blending rules must define:

Canonical company naming structure

Approved title normalization dictionary

Formatting standards across fields

Integrity protection begins before records touch each other.

Field-Level Validation Prevents Cascade Errors

Not all fields should be treated equally during blending.

Some fields tolerate variation:

Phone formatting

Middle initials

Others require strict validation:

Email domain alignment

Company-to-domain mapping

Seniority tier classification

If a blend overwrites a validated corporate email with a lower-confidence alternative, deliverability risk increases immediately.

In industries like Industrials B2B lead targeting, where procurement structures can be rigid and authority lines clearly defined, incorrect seniority blending can shift targeting toward non-decision roles without anyone noticing.

Blending rules must classify fields by sensitivity.

Not every mismatch is equal.

Preserve Source Attribution — Always

One of the most common integrity mistakes is losing source metadata during merge.

When records are blended without preserving origin tags, conflict resolution becomes impossible later.

If a title appears incorrect, you must know:

Which source supplied it

When it was imported

What its original format was

Without attribution, auditing becomes guesswork.

Blending rules should require:

Persistent source tagging

Timestamp preservation

Conflict logs for overwritten fields

Integrity isn’t just about correctness.

It’s about traceability.

Confidence Scoring Should Guide Merge Decisions

Binary merging is outdated logic.

Modern blending should operate on probabilistic confidence:

High-confidence match → merge automatically

Medium-confidence → flag for review

Low-confidence → maintain separation

Confidence scoring should consider:

Name similarity

Email-to-domain validation

Title alignment strength

Blending without scoring increases the chance of false merges — combining two distinct individuals into one record — which is often harder to detect than duplicates.

Integrity loss from over-merging can distort segmentation more severely than leaving duplicates unresolved.

Protect the Core Identity Graph

Every database should maintain a stable identity backbone:

Person ID

Company ID

Verified domain

Blending rules should prioritize protecting this core graph above enrichment fields.

When identity anchors shift due to careless merging, downstream segmentation inherits instability:

Accounts appear merged incorrectly

Contacts shift companies unexpectedly

ICP filters return inconsistent results

The blending layer must respect identity anchors as immutable unless strong evidence supports change.

Blending Is Ongoing, Not One-Time

Data integrity protection is not a single import event.

As new datasets enter the system, blending rules must re-evaluate:

Existing identities

Prior merge decisions

Confidence thresholds

Without continuous enforcement, drift reintroduces conflict.

Every new source increases blending complexity.

Without defined rules, scale magnifies corruption.

What This Means

Blending is the most underestimated integrity risk in multi-source pipelines.

Without structured rules for normalization, hierarchy, conflict resolution, and confidence scoring, merging datasets gradually distorts segmentation accuracy.

When blending logic is defined before integration, datasets strengthen each other.

When merging happens without governance, integrity erodes quietly beneath expanding volume.

Related Post:

Why Weak Targeting Logic Confuses Inbox Providers

The Real Cost of Using “Catch-All” Segments in Outbound

How Weak ICP Definitions Inflate Your Pipeline With Noise

Why Buyer Fit Accuracy Matters More Than Industry Fit

The Hidden ICP Mistakes That Make Outreach Unpredictable

How Poor Data Creates Blind Spots in Committee Mapping

Why Buying Committees Prefer Consistent Messaging Across Roles

The Contact Layering Strategy Behind Multi-Threaded Sequences

How Engagement Timing Predicts Buying Motivation

Why Intent Data Works Only When the Inputs Are Clean

The Multi-Signal Indicators Behind Strong Reply Rates

How ICP Precision Improves Reply Rate Fast

Why Bad Data Creates False Low-Reply Signals

The Underestimated Variables Behind Reply Probability

How Data Drift Creates False Confidence in Pipeline Health

Why Incorrect ICP Fit Leads to Dead Pipeline Stages

The Drop-Off Patterns That Reveal Data Quality Problems

How Duplicate CRM Entries Kill Data Reliability

Why CRM Metadata Conflicts Corrupt Segmentation

The Lifecycle Management Mistakes That Block Deals

How Scoring Drift Creates False High-Priority Leads

Why Strong Scoring Depends on Field Completeness

The Multi-Signal Scoring Framework That Actually Works

How Inconsistent Metadata Breaks Your Segmentation Logic

Why Metadata Drift Happens Inside Large Lead Lists

The Contact-Level Clues Buried Inside Metadata Fields

How Company Expansion Alters Contact Accuracy

Why Lifecycle Drift Skews Segmentation Over Time

The Stage-Based Patterns That Predict Reply Probability

How Source Diversity Boosts Lead Accuracy at Scale

Why Multi-Source Data Requires Stricter Deduplication

Connect

Get verified leads that drive real results for your business today.

www.capleads.org

© 2025. All rights reserved.

Serving clients worldwide.

CapLeads provides verified B2B datasets with accurate contacts and direct phone numbers. Our data helps startups and sales teams reach C-level executives in FinTech, SaaS, Consulting, and other industries.