The Blind Spots Inside Automation-First Outbound Systems

Automation-first outbound systems promise efficiency, but hidden blind spots in data interpretation, signal weighting, and workflow design can quietly distort targeting and revenue forecasting. Here’s where automation misses what humans see.

INDUSTRY INSIGHTSLEAD QUALITY & DATA ACCURACYOUTBOUND STRATEGYB2B DATA STRATEGY

CapLeads Team

3/1/20263 min read

Automation-first systems look impressive from the outside.

Sequences fire automatically.

Leads get scored instantly.

Stages update without manual input.

Dashboards move in real time.

It feels modern. Efficient. Controlled.

But automation-first design introduces a structural risk most teams don’t notice: it assumes that defined logic equals complete visibility.

And that assumption is where blind spots form.

When Logic Replaces Observation

Automation operates on predefined rules.

If:

Job title contains “VP” → increase score

Company size > 200 → route to Enterprise

Website visit ≥ 2 pages → trigger follow-up

Email reply detected → escalate stage

That logic may be technically correct.

But automation doesn’t question whether the underlying data is still accurate, whether the title reflects real authority, or whether the visit indicates research versus curiosity.

It executes conditions.

It does not interpret meaning.

Blind spots form not because automation fails — but because it never pauses to reassess assumptions.

The Drift Between System Rules and Market Reality

Outbound markets evolve faster than automation rules do.

ICP definitions shift.

Buying committees expand.

Titles change.

Departments merge.

Yet automation systems often continue operating on last quarter’s logic.

In sectors like Logistics industry B2B leads, for example, organizational roles can shift quickly due to supply chain restructuring. A “Head of Operations” might now report differently or control a narrower budget scope than six months ago. Automation continues scoring based on the old structure.

The system isn’t broken.

It’s outdated.

That gap creates invisible misrouting and quiet qualification errors.

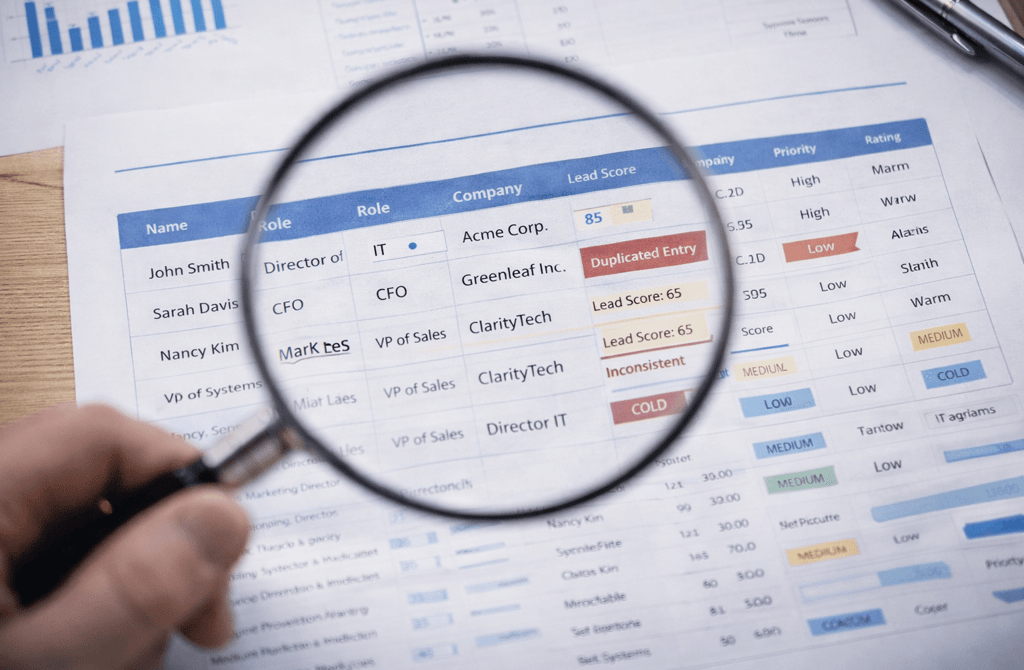

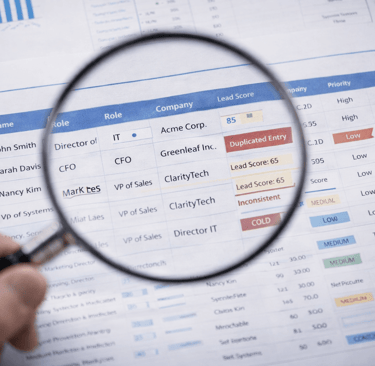

Automation Can’t See Field-Level Inconsistency

Another blind spot lives at the data-field level.

When multiple enrichment tools update CRM fields automatically, conflicts can occur:

Department field overwritten

Company size reclassified

Lead score recalculated without context

Priority flags stacking incorrectly

Automation processes each update independently.

It does not resolve contradictions.

A contact can simultaneously appear:

High priority

Low intent

Enterprise-tier

Low budget

On a dashboard, everything looks active.

Underneath, definitions have drifted.

Overconfidence in Dashboards

Automation-first systems often create visual confidence.

Green indicators.

Rising MQL counts.

Accelerating stage movement.

But dashboards measure outputs of logic — not quality of interpretation.

If scoring thresholds are slightly misaligned, stage automation will amplify that misalignment across hundreds of accounts.

The result isn’t visible failure.

It’s statistical distortion.

Forecasts start to feel less reliable.

Conversion rates become inconsistent.

Pipeline velocity appears healthy but closes unpredictably.

These are symptoms of blind spots, not performance collapse.

Automation Doesn’t Audit Itself

Perhaps the largest structural blind spot:

Automation systems rarely question their own relevance.

Rules accumulate over time:

Legacy triggers remain active

Old segmentation filters still fire

Conditional branches stack on top of each other

Manual overrides become permanent

The system grows more complex.

Visibility decreases.

Without deliberate audits, automation-first outbound becomes rule-heavy and context-light.

Restoring Visibility Without Removing Automation

The solution isn’t abandoning automation.

It’s inserting deliberate human oversight at critical points:

Quarterly rule audits

Field ownership governance

Trigger simplification

Scoring recalibration

Manual review checkpoints before major stage escalations

Automation should accelerate clarity — not replace it.

When systems are periodically examined for drift, blind spots shrink.

When they’re left unchecked, blind spots compound quietly.

Conclusion

Automation-first outbound systems feel precise because they are structured.

But structure without reassessment creates hidden gaps.

Outbound becomes dependable when logic is continuously aligned with real market conditions and clean data foundations.

When automation operates unchecked, unseen inconsistencies multiply — and predictability fades long before performance visibly declines.

Related Post:

How Engagement Timing Predicts Buying Motivation

Why Intent Data Works Only When the Inputs Are Clean

The Multi-Signal Indicators Behind Strong Reply Rates

How ICP Precision Improves Reply Rate Fast

Why Bad Data Creates False Low-Reply Signals

The Underestimated Variables Behind Reply Probability

How Data Drift Creates False Confidence in Pipeline Health

Why Incorrect ICP Fit Leads to Dead Pipeline Stages

The Drop-Off Patterns That Reveal Data Quality Problems

How Duplicate CRM Entries Kill Data Reliability

Why CRM Metadata Conflicts Corrupt Segmentation

The Lifecycle Management Mistakes That Block Deals

How Scoring Drift Creates False High-Priority Leads

Why Strong Scoring Depends on Field Completeness

The Multi-Signal Scoring Framework That Actually Works

How Inconsistent Metadata Breaks Your Segmentation Logic

Why Metadata Drift Happens Inside Large Lead Lists

The Contact-Level Clues Buried Inside Metadata Fields

How Company Expansion Alters Contact Accuracy

Why Lifecycle Drift Skews Segmentation Over Time

The Stage-Based Patterns That Predict Reply Probability

How Source Diversity Boosts Lead Accuracy at Scale

Why Multi-Source Data Requires Stricter Deduplication

The Blending Rules That Prevent Data Integrity Loss

How Bad Data Bloats Sending Volume With No Returns

Why SDR Teams Burn Out When Lead Data Is Faulty

The Compounding Waste Caused by Outdated Lead Lists

How Data Drift Disrupts Revenue Decision-Making

Why Revenue Systems Require Continuous Data Validation

The Data Signals That Reveal Structural Revenue Weakness

How Over-Automation Creates Pipeline Noise

Why SDR Judgment Beats Automation in High-Stakes Accounts

Connect

Get verified leads that drive real results for your business today.

www.capleads.org

© 2025. All rights reserved.

Serving clients worldwide.

CapLeads provides verified B2B datasets with accurate contacts and direct phone numbers. Our data helps startups and sales teams reach C-level executives in FinTech, SaaS, Consulting, and other industries.