The Multi-Signal Scoring Framework That Actually Works

Single-metric lead scoring fails in modern outbound. Learn how a multi-signal scoring framework aligns fit, intent, recency, and role accuracy.

INDUSTRY INSIGHTS · LEAD QUALITY & DATA ACCURACY · OUTBOUND STRATEGY · B2B DATA STRATEGY

CapLeads Team

2/23/2026 · 4 min read

Most scoring models don’t fail because they lack complexity.

They fail because they mistake correlation for reliability.

A contact downloads a guide. Score increases.

A company fits your size range. Score increases.

A title contains “Director.” Score increases.

Individually, those signals feel meaningful.

Collectively, without structural balance, they create unstable prioritization.

That’s why multi-signal scoring isn’t about adding more variables.

It’s about building controlled signal tension inside the model.

Why Single-Signal Weighting Breaks Under Pressure

Models dominated by a single signal tend to overweight one dimension:

Firmographic-heavy models prioritize size over timing.

Title-heavy models prioritize hierarchy over authority.

Recency-heavy models prioritize freshness over budget reality.

Each of those signals matters.

But none of them should ever operate independently.

When one signal dominates, your scoring becomes reactive rather than predictive.

That’s when you see inflated priority tiers filled with:

Highly engaged non-buyers

Perfect-fit companies with zero timing

Senior titles without budget control

Recently validated but irrelevant accounts

Multi-signal scoring fixes this by forcing signals to compete.

The Core Principle: No Signal Should Win Alone

A scoring framework that actually works applies structured friction.

A lead should not rank high unless:

It fits structurally

It aligns by role

It shows credible intent

It passes recency integrity

If one dimension is strong and the others are weak, the final score should remain constrained.

This is where most teams go wrong.

They design additive scoring systems, not conditional scoring systems.

Additive systems inflate.

Conditional systems stabilize.
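
To make the difference concrete, here is a minimal sketch. It assumes every signal is already normalized to a 0-1 range, and it uses a geometric mean as one possible way to make a score conditional; that choice, the function names, and the sample leads are illustrative assumptions, not a prescribed formula.

```python
from math import prod


def additive_score(signals: dict[str, float]) -> float:
    """Plain average of 0-1 signals: one strong signal can lift weak ones."""
    return sum(signals.values()) / len(signals)


def conditional_score(signals: dict[str, float]) -> float:
    """Geometric mean of 0-1 signals: any weak dimension drags the result down."""
    return prod(signals.values()) ** (1 / len(signals))


# Hypothetical lead: very high intent, weak everywhere else.
spiky = {"fit": 0.1, "role": 0.1, "intent": 1.0, "recency": 0.1}
# Hypothetical lead: moderately strong on every dimension.
balanced = {"fit": 0.7, "role": 0.7, "intent": 0.7, "recency": 0.7}

for name, lead in (("spiky", spiky), ("balanced", balanced)):
    print(name, round(additive_score(lead), 3), round(conditional_score(lead), 3))
```

The balanced lead scores about the same either way. The spiky lead drops well below its additive score, and a signal of zero would pull the conditional score to zero.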

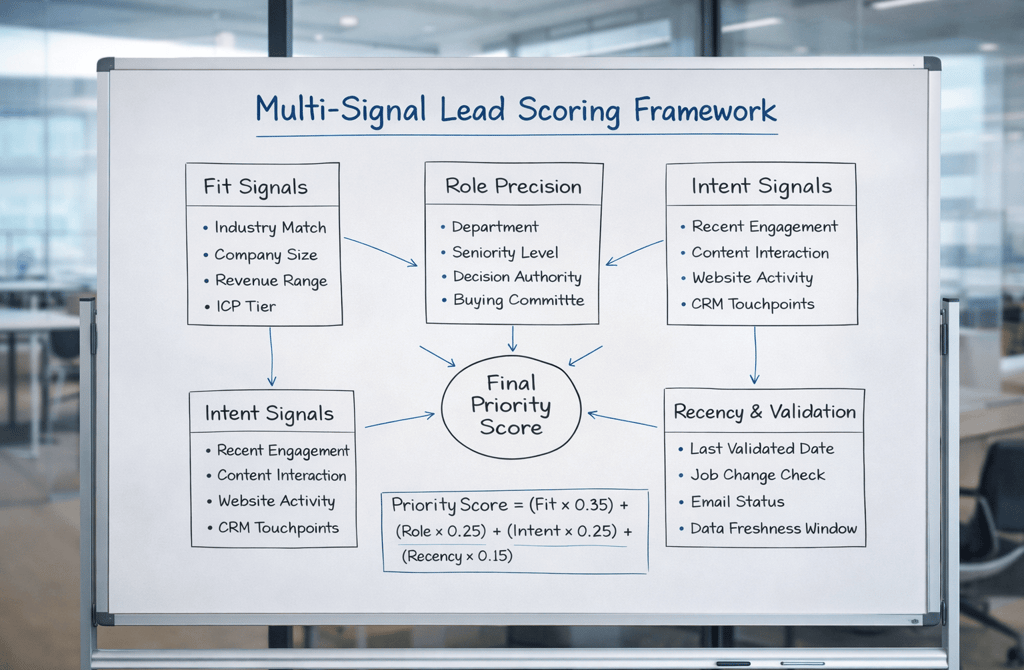

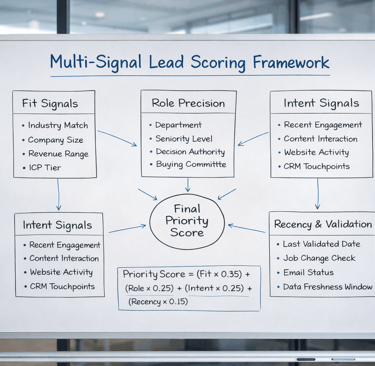

The Four-Layer Architecture That Produces Stability

A durable multi-signal scoring framework operates across four synchronized layers:

1️⃣ Structural Fit Layer

Industry match, company size, revenue band, ICP tier.

This defines economic plausibility.

In capital-intensive sectors such as industrials, structural fit matters more than engagement spikes. A small distributor downloading three whitepapers does not outweigh a properly sized manufacturing firm with aligned buying authority.

Without structural fit integrity, your model rewards noise.

2️⃣ Role & Authority Layer

Department classification, seniority, buying committee position.

Not all “Directors” are equal.

Not all “Managers” lack power.

Authority must be validated contextually — not assumed from titles alone.

If role precision is weak, prioritization becomes cosmetic.

3️⃣ Intent Layer

Recent engagement, CRM interactions, content consumption patterns.

Intent should amplify fit — not override it.

High intent without fit equals low conversion probability.

Strong fit without intent equals long-cycle nurturing.

Only when fit and intent align does urgency become real.
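
The fit and intent combinations above can be sketched as a simple quadrant check. The 0.6 threshold, the label names, and the fourth "deprioritize" quadrant are assumptions added for illustration.

```python
def classify(fit: float, intent: float, threshold: float = 0.6) -> str:
    """Map 0-1 fit and intent scores to a handling bucket."""
    fit_ok = fit >= threshold
    intent_ok = intent >= threshold
    if fit_ok and intent_ok:
        return "urgent"            # fit and intent align
    if fit_ok:
        return "nurture"           # strong fit, no intent: long-cycle nurturing
    if intent_ok:
        return "low_probability"   # high intent without fit
    return "deprioritize"          # neither signal present (assumed bucket)


print(classify(0.9, 0.8))  # fit and intent both above the threshold
print(classify(0.9, 0.1))  # fit only
print(classify(0.2, 0.9))  # intent only
```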

4️⃣ Recency & Integrity Layer

Validation date, job change checks, email status, data freshness window.

Recency prevents historical signals from distorting present opportunity.

A lead that was high-intent six months ago should not compete equally with one validated two weeks ago.

Recency acts as decay control inside the scoring model.

Without it, drift accelerates.
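
One way to picture decay control is an exponential half-life applied to the last validation date. The sketch below is an assumption, not a prescribed method: the 60-day half-life and the function name are hypothetical and should be tuned to your own sales cycle.

```python
from datetime import date


def decayed_score(score: float, validated_on: date, today: date,
                  half_life_days: float = 60.0) -> float:
    """Halve the score for every half_life_days since the last validation."""
    age_days = max(0, (today - validated_on).days)
    return score * 0.5 ** (age_days / half_life_days)


today = date(2026, 2, 23)
six_months_old = decayed_score(90.0, date(2025, 8, 23), today)
two_weeks_old = decayed_score(90.0, date(2026, 2, 9), today)
print(round(six_months_old, 1), round(two_weeks_old, 1))
```

With these assumed settings, the same raw score of 90 ends up far lower for the six-month-old record than for the one validated two weeks ago.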

The Signal Balance Rule Most Teams Ignore

The strength of multi-signal scoring isn’t in weight distribution.

It’s in dependency enforcement.

For example:

Intent score above 80 should not elevate priority if role precision is below threshold.

High structural fit should not dominate if recency integrity fails.

Seniority should not boost score without department confirmation.

This creates gating logic.

Gating logic prevents inflated prioritization.

Inflation is the root cause of scoring instability.
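
The three example rules can be sketched as gates applied before any averaging. Everything here is an illustrative assumption: the field names, the 0-100 scale, the role threshold of 60, the fit ceiling of 50, and the choice to zero out a gated signal.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    fit: float                  # structural fit, 0-100
    intent: float               # intent score, 0-100
    seniority: float            # seniority score, 0-100
    role_precision: float       # confidence in role classification, 0-100
    recency_ok: bool            # passed recency integrity checks
    department_confirmed: bool  # department verified, not inferred from title


def gated_score(lead: Lead, role_threshold: float = 60.0,
                stale_fit_ceiling: float = 50.0) -> float:
    """Apply dependency gates, then average the surviving signals."""
    # Intent only counts when role precision clears its threshold.
    intent = lead.intent if lead.role_precision >= role_threshold else 0.0
    # Fit is capped, not removed, when recency integrity fails.
    fit = lead.fit if lead.recency_ok else min(lead.fit, stale_fit_ceiling)
    # Seniority only counts with a confirmed department.
    seniority = lead.seniority if lead.department_confirmed else 0.0
    return (fit + intent + seniority) / 3


unverified = Lead(fit=90, intent=95, seniority=80, role_precision=40,
                  recency_ok=False, department_confirmed=False)
verified = Lead(fit=90, intent=95, seniority=80, role_precision=85,
                recency_ok=True, department_confirmed=True)
print(round(gated_score(unverified), 1), round(gated_score(verified), 1))
```

Both leads carry identical raw signals. Only the one that passes every gate keeps them.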

Why Multi-Signal Models Feel “Slower” but Convert Faster

When teams shift from single-signal models to multi-signal frameworks, something strange happens:

The number of “high priority” leads decreases.

At first, this feels restrictive.

But that contraction is precision.

Your SDR queue becomes smaller — and more accurate.

Reply consistency improves.

Meeting quality improves.

Pipeline velocity stabilizes.

A scoring model that works is not one that produces more top-tier leads.

It’s one that produces fewer, stronger ones.

What Actually Makes It “Work”

A multi-signal scoring framework works when:

Every core dimension is measurable.

No signal operates unchecked.

Decay windows are enforced.

Missing fields reduce confidence.

Signal interaction is deliberate.

It’s not about stacking more data.

It’s about structuring data so that priority cannot inflate easily.
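
As a small illustration of "missing fields reduce confidence," the sketch below scales a raw score by field completeness. The required field list and the linear penalty are assumptions; a real model might weight some fields more heavily than others.

```python
# Hypothetical set of fields a record needs before its score is fully trusted.
REQUIRED_FIELDS = ("industry", "company_size", "title", "department", "last_validated")


def confidence(record: dict) -> float:
    """Share of required fields that are present and non-empty (0-1)."""
    present = sum(1 for field in REQUIRED_FIELDS if record.get(field) not in (None, ""))
    return present / len(REQUIRED_FIELDS)


def adjusted_score(raw_score: float, record: dict) -> float:
    """Scale the raw score down in proportion to missing fields."""
    return raw_score * confidence(record)


partial = {"industry": "Manufacturing", "company_size": "500-1000",
           "title": "Director", "department": "", "last_validated": None}
print(confidence(partial), adjusted_score(80.0, partial))
```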

The best scoring systems are conservative by design.

They earn high priority.

They don’t hand it out.

The Real Takeaway

Scoring models don’t fail because they lack intelligence.

They fail because they lack structure.

A model built on layered, competing signals creates natural resistance to inflation.

When fit, role, intent, and recency reinforce each other, prioritization becomes stable instead of reactive.
