Why Multi-Source Data Requires Stricter Deduplication

Combining multiple data sources increases coverage — but it also multiplies duplicate risk. Here’s why stricter deduplication logic is essential when scaling multi-source lead pipelines.

INDUSTRY INSIGHTSLEAD QUALITY & DATA ACCURACYOUTBOUND STRATEGYB2B DATA STRATEGY

CapLeads Team

2/26/20263 min read

Adding more data sources feels like progress.

More coverage.

More contacts.

More enrichment layers.

But multi-source pipelines introduce a structural problem that single-source systems rarely face at scale: identity inflation.

When the same person appears three times under slightly different attributes, your database doesn’t get stronger. It gets noisier.

Multi-source systems increase accuracy potential — but only if deduplication logic becomes more sophisticated than simple email matching.

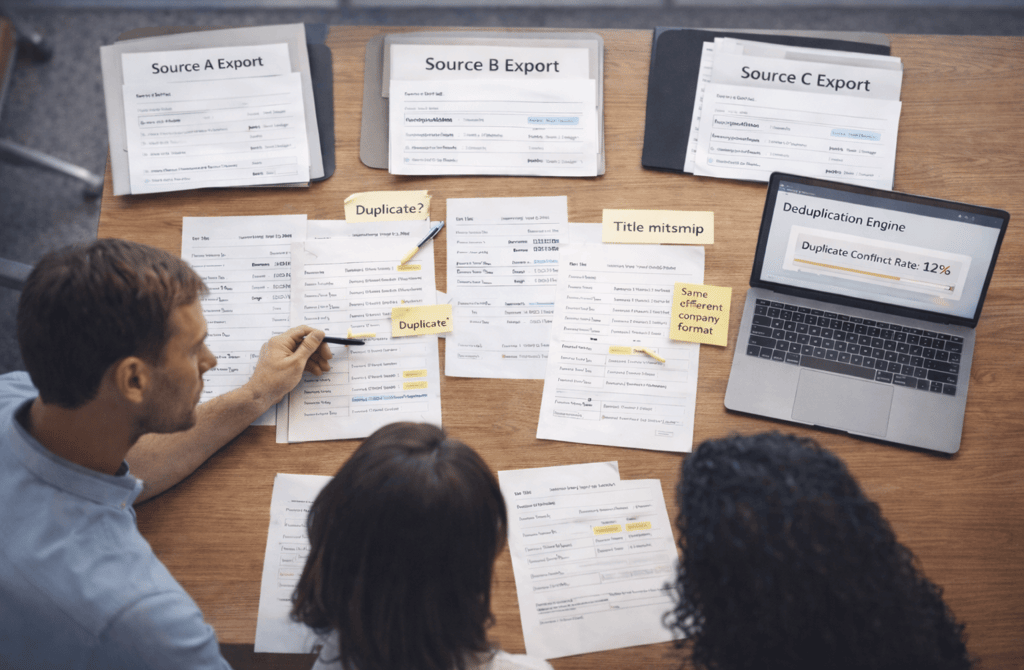

Multi-Source Doesn’t Mean Multi-Perspective — It Often Means Multi-Representation

The same contact can appear across different datasets in structurally different ways:

“VP Sales” vs “Vice President of Sales”

“Acme Inc.” vs “Acme Corporation”

With middle initial vs without

With regional office listed vs headquarters

Individually, these differences seem harmless.

At scale, they fracture identity.

If your deduplication engine relies solely on exact matches, it treats each variation as a unique record. That inflates lead volume while silently reducing targeting precision.

You don’t gain new prospects.

You gain duplicated representation of the same ones.

Email Matching Alone Is Not Enough

Many teams rely on email as the primary deduplication key.

That works — until it doesn’t.

Edge cases appear quickly in multi-source pipelines:

Same person, updated email domain

Shared inbox aliases

Personal email captured in one source, corporate email in another

Different contact emails for the same executive role

If deduplication logic stops at exact email matching, identity splits occur.

Stricter logic requires:

Name similarity scoring

Company normalization rules

Title similarity thresholds

Domain-to-company validation

Multi-source data increases the probability of identity variation. Deduplication must anticipate that variation.

The Hidden Cost of Duplicate Inflation

Duplicates don’t just clutter databases.

They distort performance metrics.

When the same contact exists in multiple segments:

Open rates inflate artificially

Reply tracking fragments

Suppression lists fail to block repeated outreach

Reporting misrepresents account penetration

In outbound campaigns targeting FinTech B2B lead segmentation, duplicated executive records can result in multiple team members contacting the same organization from different angles — believing they are engaging separate contacts.

That damages credibility.

Worse, it makes reply rate interpretation unreliable. If three records represent one person and only one receives a reply, your performance analytics misrepresent engagement probability.

Cross-Source Conflicts Create Field-Level Collisions

Multi-source integration doesn’t just duplicate identities — it creates conflicting field values.

For example:

Source A says:

Company size: 120 employees

Source B says:

Company size: 200 employees

Source C says:

Which is correct?

Without stricter deduplication rules, the system may:

Keep all three records

Merge them without resolving the conflict

Or overwrite one value arbitrarily

Each outcome affects segmentation accuracy differently.

Stricter deduplication requires conflict-resolution logic — not just record collapsing.

Scale Multiplies Collision Frequency

At small volumes, duplicate handling feels manageable.

At scale, collision frequency rises exponentially.

The more sources you integrate:

The higher the probability of overlapping coverage

The greater the variation in formatting

The more frequently edge cases appear

Multi-source pipelines are powerful — but they increase structural complexity.

Deduplication must evolve from:

“Remove exact duplicates”

to:

“Resolve probabilistic identity overlap.”

That means scoring similarity rather than relying on binary rules.

Why Tolerance Thresholds Matter

Stricter deduplication doesn’t mean aggressive deletion.

Over-merging is just as dangerous as under-merging.

If similarity thresholds are too loose:

Different people at the same company may collapse into one record.

If thresholds are too strict:

The same executive remains duplicated across segments.

Effective multi-source systems define tolerance bands:

High confidence match → auto-merge

Medium confidence → flag for review

Low confidence → maintain separate records

Without this layered logic, multi-source enrichment undermines itself.

Deduplication Is a Structural Layer — Not a Cleanup Step

Many teams treat deduplication as a final cleanup process after importing leads.

In multi-source systems, it must be embedded into ingestion logic itself.

Every new batch should be evaluated against:

Existing identity graph

Company normalization table

Title standardization dictionary

Domain validation logic

Deduplication becomes continuous, not periodic.

The more diverse your sources, the more dynamic your identity map must be.

What This Means

Multi-source data increases potential accuracy — but it also increases identity collision risk.

Without stricter deduplication logic, expanded coverage turns into inflated volume and distorted metrics.

When identity resolution scales with source diversity, accuracy compounds.

When deduplication lags behind integration, duplicates silently weaken segmentation precision.

Related Post:

Why Weak Targeting Logic Confuses Inbox Providers

The Real Cost of Using “Catch-All” Segments in Outbound

How Weak ICP Definitions Inflate Your Pipeline With Noise

Why Buyer Fit Accuracy Matters More Than Industry Fit

The Hidden ICP Mistakes That Make Outreach Unpredictable

How Poor Data Creates Blind Spots in Committee Mapping

Why Buying Committees Prefer Consistent Messaging Across Roles

The Contact Layering Strategy Behind Multi-Threaded Sequences

How Engagement Timing Predicts Buying Motivation

Why Intent Data Works Only When the Inputs Are Clean

The Multi-Signal Indicators Behind Strong Reply Rates

How ICP Precision Improves Reply Rate Fast

Why Bad Data Creates False Low-Reply Signals

The Underestimated Variables Behind Reply Probability

How Data Drift Creates False Confidence in Pipeline Health

Why Incorrect ICP Fit Leads to Dead Pipeline Stages

The Drop-Off Patterns That Reveal Data Quality Problems

How Duplicate CRM Entries Kill Data Reliability

Why CRM Metadata Conflicts Corrupt Segmentation

The Lifecycle Management Mistakes That Block Deals

How Scoring Drift Creates False High-Priority Leads

Why Strong Scoring Depends on Field Completeness

The Multi-Signal Scoring Framework That Actually Works

How Inconsistent Metadata Breaks Your Segmentation Logic

Why Metadata Drift Happens Inside Large Lead Lists

The Contact-Level Clues Buried Inside Metadata Fields

How Company Expansion Alters Contact Accuracy

Why Lifecycle Drift Skews Segmentation Over Time

The Stage-Based Patterns That Predict Reply Probability

How Source Diversity Boosts Lead Accuracy at Scale

Connect

Get verified leads that drive real results for your business today.

www.capleads.org

© 2025. All rights reserved.

Serving clients worldwide.

CapLeads provides verified B2B datasets with accurate contacts and direct phone numbers. Our data helps startups and sales teams reach C-level executives in FinTech, SaaS, Consulting, and other industries.