How Scoring Drift Creates False High-Priority Leads

Lead scoring drift quietly inflates priority accounts with outdated or incomplete data. Learn how scoring misalignment damages pipeline accuracy and outbound ROI.

INDUSTRY INSIGHTS | LEAD QUALITY & DATA ACCURACY | OUTBOUND STRATEGY | B2B DATA STRATEGY

CapLeads Team

2/23/2026 · 4 min read

A lead score can look perfectly healthy while the underlying data quietly rots.

That’s the danger of scoring drift. It is not a broken scoring model or a misconfigured CRM. It is a gradually widening gap between what your scoring system treats as a quality signal and what your market is actually doing.

Over time, those small mismatches inflate the wrong accounts — and your team ends up chasing “priority” leads that were never truly high-fit in the first place.

Let’s break down how this happens.

What Scoring Drift Actually Is

Lead scoring models are built on weighted variables:

Company size

Role seniority

Engagement signals

Recency

Lifecycle stage

At launch, the model reflects reality. Your ICP is accurate. Your firmographic assumptions are correct. Your engagement weights make sense.

But markets move.

Titles evolve. Departments restructure. Companies change hiring velocity. Industries shift maturity levels. Recency windows stretch.

When those shifts aren’t recalibrated inside your scoring logic, the model begins overvaluing outdated signals.

That’s scoring drift.

The model still runs. The numbers still calculate. But the inputs no longer reflect current buying behavior.
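To make the idea of weighted variables concrete, here is a minimal sketch of a weighted-sum score. The weights, the field names, and the 0.0–1.0 normalization are all assumptions for illustration, not the configuration of any particular CRM.

```python
from dataclasses import dataclass

# Hypothetical weights, for illustration only; they sum to 1.0.
WEIGHTS = {
    "company_size": 0.30,
    "role_seniority": 0.25,
    "engagement": 0.25,
    "recency": 0.10,
    "lifecycle_stage": 0.10,
}


@dataclass
class LeadSignals:
    # Each signal is assumed to be pre-normalized to the range 0.0-1.0.
    company_size: float
    role_seniority: float
    engagement: float
    recency: float
    lifecycle_stage: float


def lead_score(signals: LeadSignals) -> float:
    """Return a 0-100 score as the weighted sum of the normalized signals."""
    total = sum(WEIGHTS[name] * getattr(signals, name) for name in WEIGHTS)
    return round(total * 100, 1)


lead = LeadSignals(company_size=1.0, role_seniority=0.8, engagement=0.6,
                   recency=0.5, lifecycle_stage=0.4)
print(lead_score(lead))  # 74.0
```

Nothing in this function fails when the market changes. The arithmetic stays valid while the weights and normalization rules age, which is why drift goes unnoticed.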

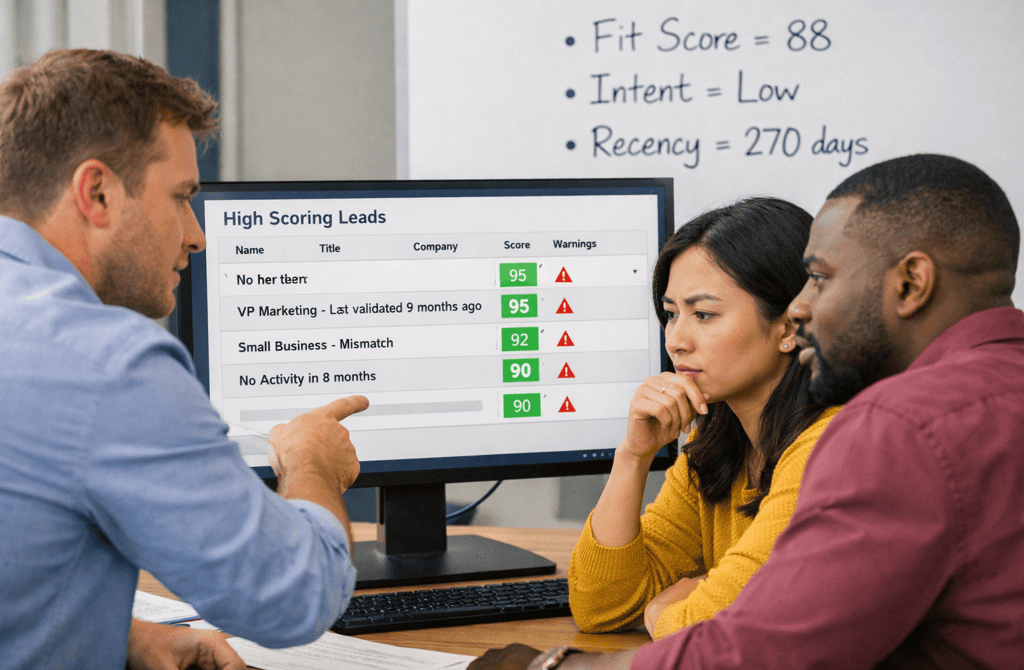

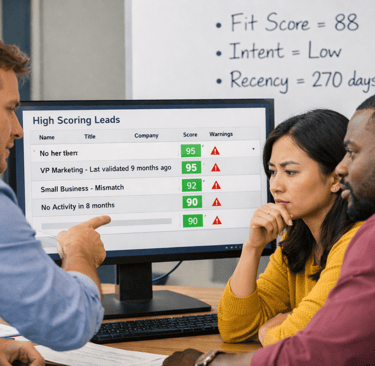

How False High-Priority Leads Get Created

Scoring drift usually inflates leads in three ways:

1️⃣ Outdated Firmographic Weighting

Let’s say your model heavily weights mid-market SaaS companies with 100–300 employees.

Six months later, your strongest reply velocity actually comes from leaner 40–80 person teams. But your score still favors the larger bracket.

Now your “top priority” list is misaligned — and your real buyers are sitting below the threshold.
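One rough way to catch this is to compare the order your model prefers with the order your replies prefer. The sketch below assumes hypothetical size brackets, weights, and send/reply counts; substitute your own export.

```python
# Hypothetical size brackets (employees) and the weight the scoring model
# currently assigns to each. Values are illustrative only.
configured_weight = {"40-80": 0.4, "100-300": 1.0, "301-1000": 0.6}

# Hypothetical outbound results for the last quarter: (emails sent, replies).
observed = {"40-80": (500, 45), "100-300": (500, 20), "301-1000": (500, 10)}


def rank(values: dict) -> list:
    """Return bracket names ordered from highest to lowest value."""
    return sorted(values, key=values.get, reverse=True)


reply_rate = {b: replies / sent for b, (sent, replies) in observed.items()}

model_order = rank(configured_weight)   # what the score favors
market_order = rank(reply_rate)         # what actually replies

if model_order[0] != market_order[0]:
    print(f"Possible drift: model favors {model_order[0]}, "
          f"best reply rate is in {market_order[0]}")
```

A mismatch at the top of the two rankings is a prompt to review the weights, not proof on its own. Small send volumes can produce the same pattern by chance.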

2️⃣ Recency Decay Isn’t Aggressive Enough

Engagement from 120 days ago still carries weight in the score.

In fast-moving service sectors such as consulting, role changes and client-cycle turnover can make engagement signals expire far sooner than most scoring models assume.

Your model thinks the account is warm.

The market has already moved on.
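A common way to model this is exponential decay with a half-life. The sketch below assumes that approach and uses two hypothetical half-lives (90 and 30 days) to show how much of a 120-day-old signal survives under each.

```python
def decayed_weight(age_days: float, half_life_days: float) -> float:
    """Exponential decay: the weight halves every `half_life_days`."""
    return 0.5 ** (age_days / half_life_days)


# Hypothetical half-lives; tune them per sector from your own reply data.
for half_life in (90, 30):
    w = decayed_weight(age_days=120, half_life_days=half_life)
    print(f"half-life {half_life}d -> 120-day-old signal keeps {w:.0%}")
```

With a 90-day half-life the old engagement still keeps about 40% of its original weight. With a 30-day half-life it keeps about 6%. The right value depends on how quickly contacts and priorities turn over in the sector you sell into.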

3️⃣ Title Inflation and Role Drift

Titles change faster than scoring systems adapt.

“Head of Growth” meant decision-maker last year.

Now it’s often a mid-level operator.

If your scoring model doesn’t adjust for role-level evolution, it over-prioritizes contacts who no longer carry buying authority.

That’s how false priority accounts rise to the top.

The Hidden Damage Inside the Pipeline

Scoring drift doesn’t immediately tank performance. That’s why it’s dangerous.

Instead, it creates slow distortions:

SDRs spend time on “qualified” leads that stall

True high-intent accounts get delayed follow-up

Reply velocity drops without obvious cause

Pipeline stages inflate with low-conversion deals

From a reporting standpoint, everything looks stable.

But conversion efficiency erodes.

The model keeps telling you where to focus — and it’s quietly wrong.

Why Drift Happens Faster Than Teams Expect

There are three structural reasons scoring drift accelerates:

1. ICP Evolution

Your best-fit customer profile shifts as your positioning matures. If scoring weights aren’t updated quarterly, your priority logic freezes in time.

2. Industry Velocity Differences

Fast-moving sectors (SaaS, cybersecurity, logistics) experience role and company movement much faster than traditional industries. Score recency windows must reflect that decay speed.

3. Data Aging Inside the CRM

Even if new leads are clean, historical records accumulate metadata conflicts. If old engagement still influences scoring, stale signals overpower fresh intent.

Scoring drift is rarely about bad math.

It’s about outdated assumptions.

How to Detect Scoring Drift Early

There are early-warning indicators:

High-score accounts consistently reply at lower rates than mid-score segments

“Top priority” leads show longer response cycles

Sales reports stalled deals from high-fit tiers

Reply velocity improves when you manually override scoring

When manual judgment outperforms automated prioritization, drift is already active.
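The manual-override signal can be checked with a simple comparison of reply times. This is a sketch on made-up numbers: both lists of hours-to-first-reply are hypothetical, and a real check would need comparable batches and far more leads.

```python
from statistics import median

# Hypothetical hours-to-first-reply for two outreach batches.
score_picked = [72, 96, 120, 48, 144]    # leads chosen by the scoring model
manually_picked = [24, 36, 60, 30, 48]   # leads chosen by rep judgment

score_median = median(score_picked)
manual_median = median(manually_picked)

if manual_median < score_median:
    print(f"Manual picks reply faster ({manual_median}h vs {score_median}h); "
          "check the model for drift")
```

The median is used here so that one very slow reply does not dominate the comparison.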

Correcting Scoring Drift Before It Spreads

Scoring models should not be static frameworks. They require periodic recalibration:

Re-evaluate firmographic weightings quarterly

Tighten recency decay curves for high-churn sectors

Audit title-level authority shifts

Reduce engagement influence from aged activity

Compare reply velocity across score bands

Most importantly:

Track conversion rate by score tier.

If your 60–70 range outperforms your 85–95 tier, your scoring logic is no longer aligned with buying reality.
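Tracking this can be as simple as grouping closed-loop outcomes by score tier and flagging any lower tier that beats a higher one. The tiers and lead records below are hypothetical, and the sample is far too small to act on; it only shows the shape of the check.

```python
from collections import defaultdict
from itertools import combinations
from typing import Optional

# Hypothetical tiers as (label, lowest score, highest score), low to high.
TIERS = [("60-70", 60, 70), ("71-84", 71, 84), ("85-95", 85, 95)]

# Hypothetical closed-loop data: (lead score, converted?).
leads = [(62, True), (65, True), (68, False), (70, True),
         (75, True), (80, False), (82, False), (84, False),
         (86, False), (90, False), (92, True), (95, False)]


def tier_of(score: int) -> Optional[str]:
    for label, low, high in TIERS:
        if low <= score <= high:
            return label
    return None  # score falls outside the tracked tiers


def conversion_by_tier(rows):
    counts = defaultdict(lambda: [0, 0])  # label -> [converted, total]
    for score, converted in rows:
        label = tier_of(score)
        if label is not None:
            counts[label][0] += int(converted)
            counts[label][1] += 1
    return {label: won / total for label, (won, total) in counts.items()}


rates = conversion_by_tier(leads)
for label, _, _ in TIERS:
    print(f"{label}: {rates[label]:.0%}")

# Flag every lower tier that converts better than a higher tier.
labels = [label for label, _, _ in TIERS]
inversions = [(lo, hi) for lo, hi in combinations(labels, 2)
              if rates[lo] > rates[hi]]
print("Inverted tier pairs:", inversions)
```

In this made-up sample the 60-70 tier converts at 75% while the 85-95 tier converts at 25%, so the check reports an inversion between them.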

System Implication

Lead scoring is supposed to create clarity.

When drift sets in, it creates illusion.

False high-priority leads are not just inefficiencies. They distort resource allocation, delay true buyers, and weaken outbound predictability over time.

Prioritization only works when the data underneath reflects today’s market behavior — not last quarter’s assumptions.

When scoring logic drifts, your team moves with confidence in the wrong direction.

When scoring stays aligned with fresh, accurate signals, priority actually means something again.

