How B2B Teams Compare Lead Generation Channels in Practice

See how B2B teams compare lead generation channels in practice—based on real performance, data quality, and decision-making patterns, not surface-level pros and cons.

CapLeads Team

3/24/2026 · 4 min read

Channel comparisons rarely start as structured decisions.

They usually begin after something stops working.

A campaign slows down. Replies become inconsistent. Pipeline feels uneven. And instead of asking which channel is “best,” teams start asking a more practical question:

why did this channel behave differently this time?

That’s where real comparisons begin—not from theory, but from disruption.

Comparison Doesn’t Happen Upfront

Most teams don’t sit down and logically compare channels before running them.

They start with one:

cold email because it scales

LinkedIn because it feels safer

calls because they want faster feedback

Only after running it for a while do they realize:

results fluctuate from one campaign to the next

performance that looked reliable early on doesn’t hold

So comparison isn’t an initial decision.

It’s something that emerges after friction.

What Teams Actually Compare (But Don’t Say Out Loud)

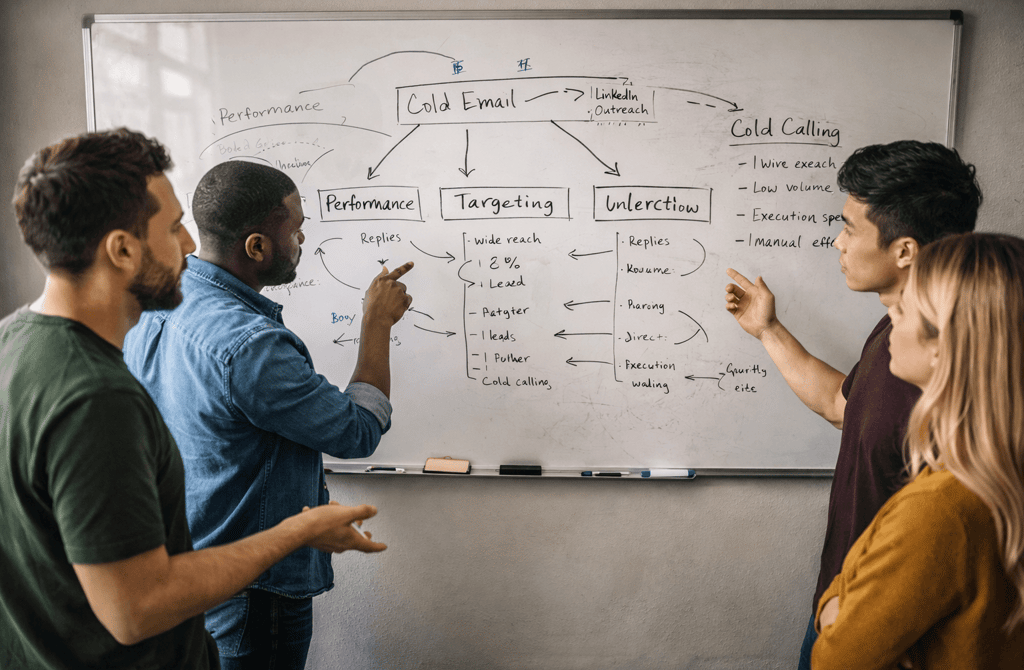

When teams look at different channels, they don’t just compare:

reply rates

conversion rates

Those are surface-level.

What they really compare is:

how stable results are over time

how sensitive the channel is to data quality

how quickly performance drops when something shifts

For example, email might look strong at first, but once the contact data starts aging, bounces creep up and performance becomes unpredictable.

LinkedIn may feel slower, but its results tend to stay consistent longer because its signals don’t decay as quickly.

Calls can generate immediate feedback—but only if the contact data is precise enough to reach the right person.

So the comparison isn’t:

which channel performs better

It’s:

which channel holds up under pressure
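One way to make “holds up under pressure” measurable, sketched here under the assumption that you log a reply rate per channel per week, is to look past the average and at how much the rate moves. The function name `stability_report` and the two metrics it returns (`cv`, the coefficient of variation, and `worst_drop`, the largest week-over-week relative decline) are illustrative choices, not a standard this post prescribes.

```python
from __future__ import annotations

from statistics import mean, pstdev


def stability_report(weekly_reply_rates: dict[str, list[float]]) -> dict[str, dict[str, float]]:
    """Summarize how steady each channel's weekly reply rate is (hypothetical helper).

    weekly_reply_rates maps a channel name to its reply rates, oldest week first.
    """
    report = {}
    for channel, rates in weekly_reply_rates.items():
        if len(rates) < 2:
            continue  # need at least two weeks before stability means anything
        avg = mean(rates)
        # Coefficient of variation: spread relative to the average (lower = steadier).
        cv = pstdev(rates) / avg if avg > 0 else float("inf")
        # Largest relative drop from one week to the next (0.0 = it never dropped).
        worst_drop = max(
            (max(prev - cur, 0.0) / prev for prev, cur in zip(rates, rates[1:]) if prev > 0),
            default=0.0,
        )
        report[channel] = {"mean": avg, "cv": cv, "worst_drop": worst_drop}
    return report
```

A channel with a slightly lower mean but a much lower `cv` and `worst_drop` is, by this measure, the steadier one.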

The Role of Data Changes the Comparison

This is where most comparisons break down.

Teams assume they are comparing channels.

But they’re actually comparing:

differences in targeting quality

differences in data accuracy

So their conclusions are flawed.

Channels behave very differently when the underlying data reflects how roles actually operate inside companies. Industrial-sector lead datasets are one example: responsibilities there are tied closely to production, procurement, or operations rather than to generic titles. Outreach then becomes less about guessing whom to contact and more about reaching someone who is already positioned within the decision flow.

That shifts the entire comparison:

email becomes more stable

calls become more relevant

LinkedIn becomes more targeted

Not because the channels changed—but because the input did.

Why Teams Change Their Channel Preference Over Time

Early on, teams often prefer:

email for scale

LinkedIn for safety

calls for speed

But as they gain experience, preferences shift.

Not randomly—but based on what they’ve seen fail.

Teams burned by high bounce rates start distrusting email

Teams frustrated with low LinkedIn replies reduce reliance on it

Teams struggling with call efficiency start narrowing segments

So channel preference is rarely strategic.

It’s shaped by past failure patterns.

Comparison Becomes a Filtering Process

Over time, teams stop comparing channels broadly.

Instead, they begin filtering:

which channel works for this segment

which channel holds up for this type of data

which channel fails fastest under pressure

This leads to a different kind of comparison:

not channel vs channel

but channel vs context

For example:

email might work best for structured, validated segments

LinkedIn might perform better when intent signals are visible

calls might be reserved for high-confidence targets

The comparison becomes situational, not universal.

Where Most Teams Get It Wrong

The biggest mistake isn’t choosing the wrong channel.

It’s assuming the comparison is static.

Teams often:

decide one channel is “better”

double down

ignore changes in data or targeting

Then, when performance drops, they switch channels entirely without understanding why the first one failed.

So instead of learning how channels behave, they cycle through them.

What This Means

Channel comparison in B2B isn’t about ranking options.

It’s about understanding behavior under changing conditions.

Teams that get this right don’t ask:

which channel is best

They ask:

what conditions make this channel work

what signals show it’s starting to fail

how it responds to changes in data and targeting
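The question “what signals show it’s starting to fail” can also be turned into a simple check. The sketch below assumes weekly reply rates for a single channel and flags any week that falls below a fraction of that channel’s own trailing average. `failing_weeks`, the 4-week window, and the 0.7 threshold are hypothetical defaults chosen for illustration; for a metric where higher is worse, such as bounce rate, the comparison would need to be reversed.

```python
from __future__ import annotations

from statistics import mean


def failing_weeks(rates: list[float], window: int = 4, threshold: float = 0.7) -> list[int]:
    """Return indexes of weeks whose rate fell below threshold x the trailing average.

    window and threshold are illustrative defaults, not recommended values.
    """
    flagged = []
    for i in range(window, len(rates)):
        baseline = mean(rates[i - window:i])  # average of the previous `window` weeks
        if baseline > 0 and rates[i] < threshold * baseline:
            flagged.append(i)
    return flagged
```

Comparing a channel against its own recent baseline, rather than against another channel, keeps the check contextual in the way this section describes.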

Once comparison becomes contextual, decisions become more stable.

Outbound doesn’t improve because you picked the “right” channel. It improves because you understand when each channel holds—and when it breaks.

A consistent pipeline doesn’t come from switching channels. It comes from recognizing the conditions that make each one perform.

When your inputs stay aligned, channels behave predictably. When they drift, even the best-performing channel can break down before anyone notices why.

Connect

Get verified leads that drive real results for your business today.

www.capleads.org

© 2025. All rights reserved.

Serving clients worldwide.

CapLeads provides verified B2B datasets with accurate contacts and direct phone numbers. Our data helps startups and sales teams reach C-level executives in FinTech, SaaS, Consulting, and other industries.